Verification Beats Fluency: What Watercooler Prevents (A/B Test in Non‑Coding Planning)

What we're trying to show

This post isn't about "AI hallucinations." It's about a quieter failure mode teams hit when they move fast:

Unverified claims that sound right harden into plans.

We ran a simple A/B experiment to test one question:

When a claim appears that needs historical grounding, can the team cheaply verify it against a durable record—or do they just keep going?

Watercooler's claim is that it makes verification normal: fast enough, precise enough, and attributable enough that teams don't have to rely on vibes, memory, or confidence.

The setup

A quick clarification on scope:

Watercooler engineers structure, not outcomes. In practice that means we can guarantee a durable, searchable record with provenance and fast verification. The more emergent effects—serendipity, creative collision, unexpected connections—tend to arise later as structured context accumulates and becomes easy to traverse.

We tested Watercooler on a scenario with real constraints but nothing to do with code:

Offsite planning, with recurring locations and institutional memory.

- Scenario: Offsite #8 — Stockholm (winter return visit)

- Group: 11 people

- Budget: $20K

- Dietary constraints: 8, including a new one (halal)

- Key challenge: distinguish summer Stockholm (#6) from winter Stockholm (#8)

Two teams answered the same set of questions:

- Team A: no Watercooler

- Team B: Watercooler available (threads + search + decision traces)

Both teams could use normal tools (web search, general research). Only Team B had a searchable, structured record of prior offsites and decisions.

How scoring worked

Each question was scored 1–5 by a reviewer:

- 5: correct, specific, and verifiably grounded (citations to prior record where applicable)

- 4: solid answer but missing specificity, provenance, or a key constraint

- 3–1: partially correct, down to incorrect or ungrounded

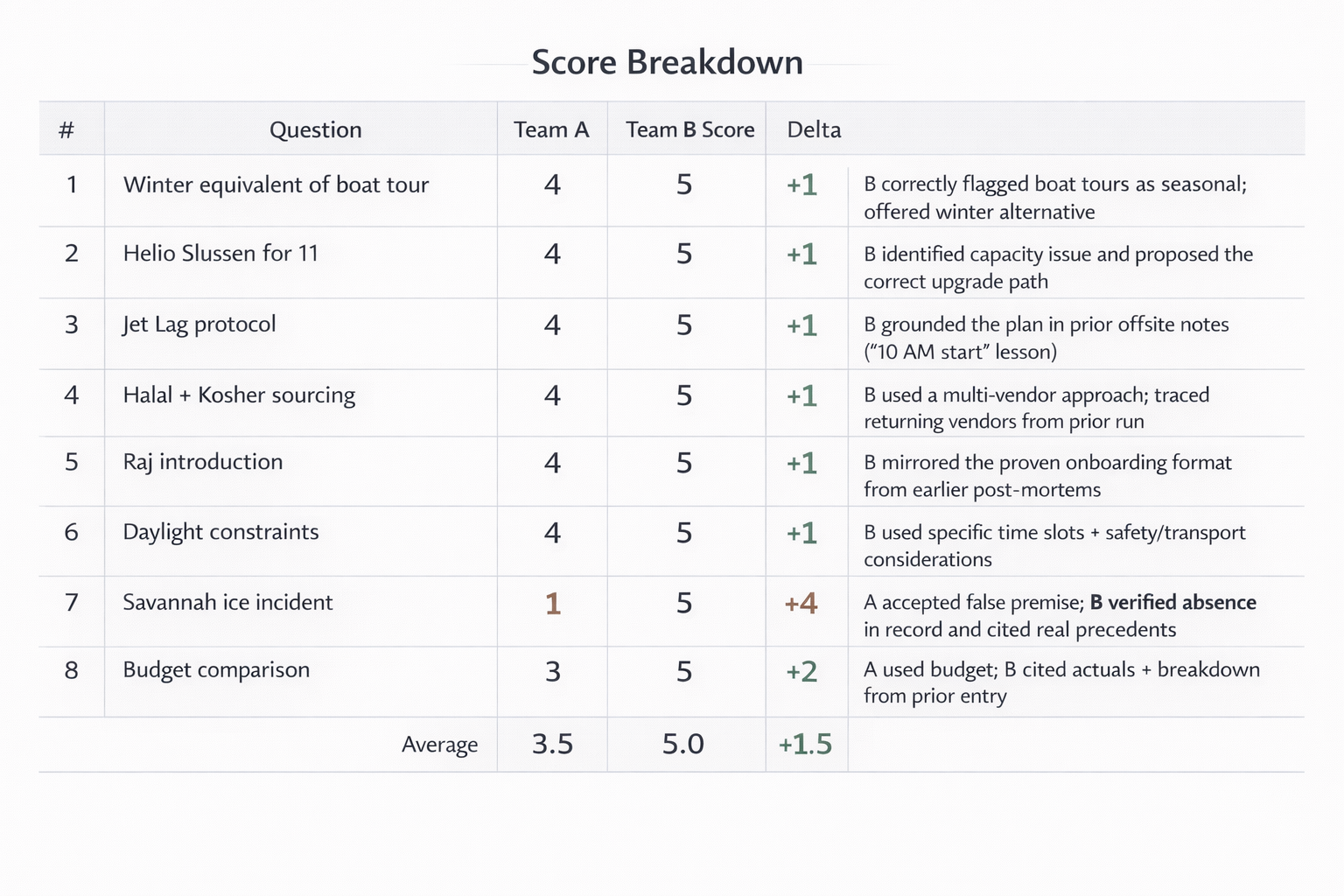

Results (headline)

Across the "substantive" planning questions (venues, jet lag, daylight, sourcing), both teams were strong.

The separation came when a question required verifying institutional memory rather than generating a plausible plan.

Q&A scores (Turn 8)

| Q# | Question | Team A | Team B | Delta | Notes |
|---|---|---|---|---|---|
| 1 | Winter equivalent of boat tour | 4 | 5 | +1 | B correctly flagged boat tours as seasonal; offered a winter alternative |
| 2 | Helio Slussen for 11 | 4 | 5 | +1 | B identified the capacity issue and proposed the correct upgrade path |
| 3 | Jet lag protocol | 4 | 5 | +1 | B grounded the plan in prior offsite notes ("10 AM start" lesson) |
| 4 | Halal + kosher sourcing | 4 | 5 | +1 | B used a multi-vendor approach; traced returning vendors from the prior run |
| 5 | Raj introduction | 4 | 5 | +1 | B mirrored the proven onboarding format from earlier post‑mortems |
| 6 | Daylight constraints | 4 | 5 | +1 | B used specific time slots + safety/transport considerations |
| 7 | "Savannah ice incident" | 1 | 5 | +4 | A accepted a false premise; B verified its absence from the record and cited real precedents |
| 8 | Budget comparison | 3 | 5 | +2 | A used the budgeted amount; B cited actuals + breakdown from the prior entry |
| Average | | 3.5 | 5.0 | +1.5 | |
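The Average row is an unweighted mean over the eight questions, and Delta is Team B minus Team A. A quick Python check using the per-question scores from the Turn 8 table:

```python
# Per-question reviewer scores from the Turn 8 table (Q1..Q8).
team_a = [4, 4, 4, 4, 4, 4, 1, 3]
team_b = [5, 5, 5, 5, 5, 5, 5, 5]


def mean(scores: list[int]) -> float:
    """Unweighted mean of 1-5 reviewer scores."""
    return sum(scores) / len(scores)


# Per-question delta is Team B minus Team A.
deltas = [b - a for a, b in zip(team_a, team_b)]

avg_a = mean(team_a)
avg_b = mean(team_b)
avg_delta = mean(deltas)

print(f"Team A {avg_a:.1f}, Team B {avg_b:.1f}, Delta {avg_delta:+.1f}")
# prints: Team A 3.5, Team B 5.0, Delta +1.5
```

Q7 alone contributes 4 of the 12 total delta points, which is a third of Team B's average lead.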

Score trend (all turns so far)

| Turn | City | Team A | Team B | Gap | Verification check |
|---|---|---|---|---|---|
| 1 | Portland | 4.8 | 4.0 | -0.8 | — |
| 2 | Austin | 4.0 | 4.8 | +0.8 | — |
| 3 | Denver | 3.4 | 5.0 | +1.6 | — |
| 4 | Nashville | 2.6 | 4.6 | +2.0 | — |
| 5 | Savannah | 4.0 | 5.0 | +1.0 | — |
| 6 | Stockholm | 4.5 | 5.0 | +0.5 | — |
| 7 | Austin (return) | 2.5 | 5.0 | +2.5 | Q7: 1 vs 5 |
| 8 | Stockholm (winter) | 3.5 | 5.0 | +1.5 | Q7: 1 vs 5 |

The centerpiece moment: "There is no Savannah ice incident."

One question referenced a prior incident that sounds like the kind of thing that would have happened:

"Are we at risk of repeating the Savannah ice incident?"

There is no Savannah ice incident.

This is a clean example of what shows up in real planning:

- a confident misremembering,

- a half‑heard Slack retelling,

- or an AI‑shaped "sounds-right" narrative.

What each team did

- Team A accepted the premise and treated it as a "gap in institutional memory."

- Team B searched Watercooler, found no record, rejected the claim, and cited the actual safety precedents from prior offsites.
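The check Team B ran can be sketched as a lookup against the record: search for entries that support the premise, cite them if they exist, and flag the premise as unverified if none do. This is a minimal sketch assuming a hypothetical in-memory `Entry` type and plain whole-word matching; it is not Watercooler's actual search API, and the two entries are illustrative placeholders, not the experiment's record.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Entry:
    """One entry in a durable record (hypothetical shape, not Watercooler's schema)."""
    entry_id: str  # provenance: a stable ID a reviewer can cite
    thread: str
    text: str


def words(text: str) -> set[str]:
    """Lowercased word tokens, so 'ice' does not match 'office' or 'notice'."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def find_evidence(record: list[Entry], required_terms: list[str]) -> list[Entry]:
    """Entries that mention every required term as a whole word."""
    terms = {t.lower() for t in required_terms}
    return [e for e in record if terms <= words(e.text)]


def check_premise(record: list[Entry], required_terms: list[str]) -> str:
    """Cite supporting entries, or flag the premise as unverified if none exist."""
    hits = find_evidence(record, required_terms)
    if not hits:
        return "unverified: no entry in the record supports this premise"
    return "supported by: " + ", ".join(e.entry_id for e in hits)


# Illustrative entries only; not the experiment's actual record.
record = [
    Entry("entry-1", "venue-notes", "Savannah offsite venue notes and walking routes."),
    Entry("entry-2", "playbook", "Lesson: a 10 AM start on day one helps with jet lag."),
]

print(check_premise(record, ["Savannah", "ice"]))
# prints: unverified: no entry in the record supports this premise
print(check_premise(record, ["10", "AM", "start"]))
# prints: supported by: entry-2
```

Whole-word matching is a deliberately small stand-in for real search; the point is the shape of the check: a premise either comes back with citable entry IDs or it comes back flagged.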

This is the simplest way we know to summarize the value:

Watercooler didn't make Team B more creative. It made them harder to mislead—by accident or by drift.

Why this isn't a "gotcha"

We weren't trying to trap anyone. The world supplies this failure mode for free:

- a teammate misremembers with confidence,

- a doc implies something it never said,

- an AI assistant fills a gap with a plausible story,

- a plan inherits folklore as fact.

When teams move fast—especially with AI in the loop—verification becomes the scarce resource.

Watercooler is built to make verification cheap enough to be normal.

Secondary signals (what changed beyond the one question)

Even in a non‑coding scenario, the Watercooler team naturally exhibited behaviors we care about:

1) They cited the record, not vibes

Team B anchored answers in specific prior entries: "Here's what we did," "Here's what broke," "Here's the actual number."

That lowers the review burden: it's easier to evaluate a claim when it comes with provenance.

2) They resolved precision disputes without debate

Budgets are a good example: Team A used the budgeted amount, while Team B cited the actual spend along with a breakdown.

A surprising amount of coordination fatigue is just this: people debating whose memory is correct because nobody can cheaply check.

3) They turned choices into durable decisions

Team B recorded decisions as decisions (high decision density), and cross‑posted lessons to the relevant domain threads (playbook, dietary registry, venue notes).

That's how "why" becomes durable: not by hoping you remember, but by leaving future‑you a map.
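Recording a choice as a decision and cross-posting it can be sketched as a small data structure: each decision carries its rationale and source thread, and cross-posting makes the same entry visible from every domain thread it affects. The `Decision` and `Record` names, the thread names, and the in-memory storage are hypothetical illustrations, not Watercooler's schema; the example decision paraphrases Q1 from the Turn 8 table.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Decision:
    """A choice recorded as a decision, with its 'why' (hypothetical shape)."""
    decision_id: str
    summary: str
    rationale: str
    source_thread: str


class Record:
    """Minimal in-memory record: each thread lists the decisions posted to it."""

    def __init__(self) -> None:
        self.threads: defaultdict[str, list[Decision]] = defaultdict(list)

    def record(self, decision: Decision, cross_post_to: list[str]) -> None:
        """Post to the source thread, then cross-post to each domain thread once."""
        targets = [decision.source_thread, *cross_post_to]
        for thread in dict.fromkeys(targets):  # drop duplicates, keep order
            self.threads[thread].append(decision)

    def why(self, thread: str) -> list[str]:
        """Rationales visible from a thread, in the order they were recorded."""
        return [f"{d.decision_id}: {d.rationale}" for d in self.threads.get(thread, [])]


# Illustrative usage; the decision paraphrases Q1 from the Turn 8 table.
rec = Record()
rec.record(
    Decision(
        decision_id="d-1",
        summary="Replace the summer boat tour with a winter alternative",
        rationale="Boat tours are seasonal",
        source_thread="offsite-8",
    ),
    cross_post_to=["venue-notes", "playbook", "offsite-8"],
)
print(rec.why("playbook"))  # ['d-1: Boat tours are seasonal']
```

Storing the rationale on the decision itself, rather than only in the thread where it was discussed, is what lets a later reader of the playbook or venue notes see the "why" without reconstructing the original conversation.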

What this suggests (and what it doesn't)

This experiment doesn't prove "Watercooler makes teams smarter." It suggests something more practical:

A durable, searchable record shifts teams from confident improvisation to earned confidence.

Put another way: Watercooler guarantees the structure that makes verification normal; the creativity comes from what teams do with that structure over time.

And it works outside of code, because the underlying problem isn't code—it's institutional memory under constraint.

Optional: the repo

If you're curious about the codebase behind Watercooler: